- 10 November, 2022

The Hype Around Semantic Layers: How Important Are Standards?

There are several reasons why the notion of semantic layers has reached the forefront of today’s data management conversations. The analyst community is championing the data fabric tenet. The data mesh and data lakehouse architectures are gaining traction. Data lakes are widely deployed. Even architecture-agnostic business intelligence tooling seeks to harmonize data across sources.

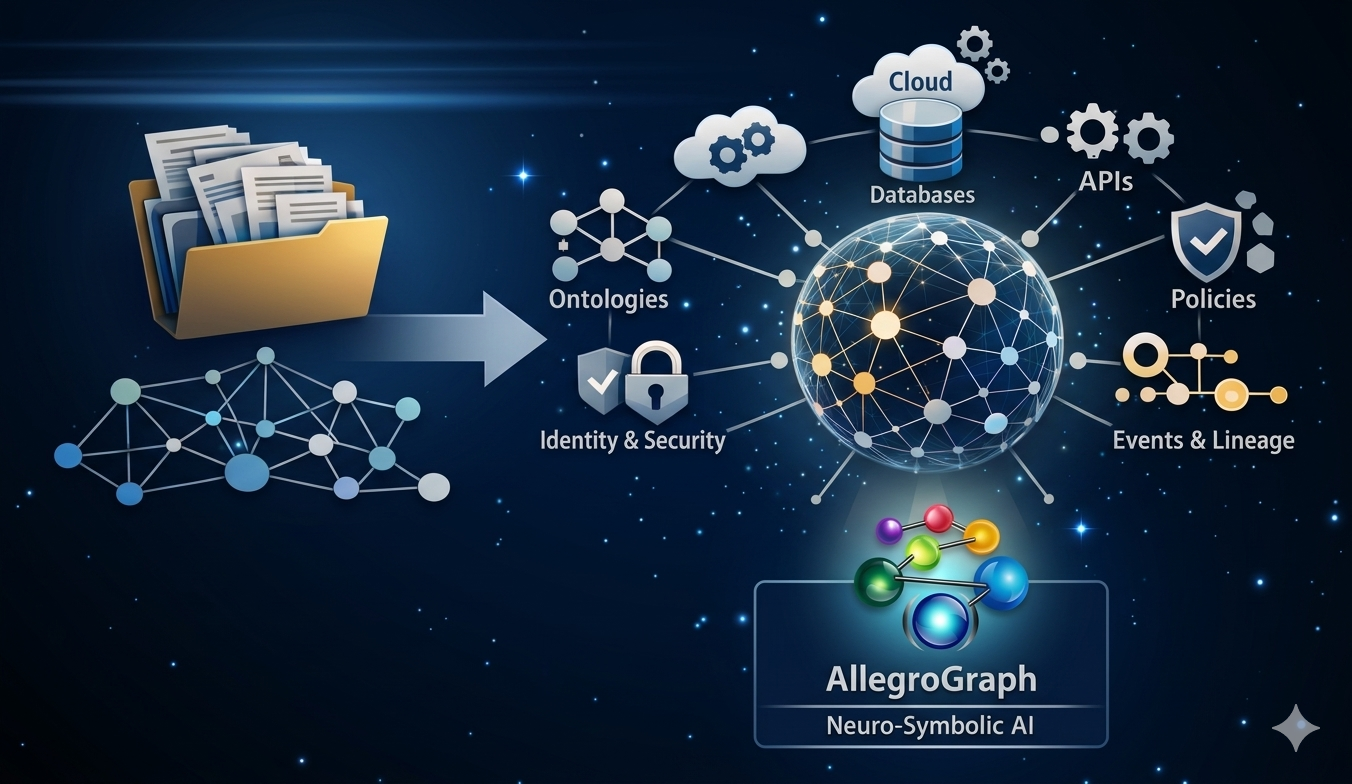

Each of these frameworks requires a semantic layer to ascribe business meaning to data – via metadata – so end users can understand data for their purposes and streamline data integration. This layer sits between users and sources, so the former can comprehend data without knowing the underlying data formats.

Additionally, a semantic layer must incorporate a digital asset knowledge graph for a unified description of data assets in all sources – like those feeding data lakes and data lakehouses. This catalog is especially important for identifying what data is in unstructured data sources, relational databases, streaming data, document stores, and other sources for data fabric or data mesh deployments.
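As a rough illustration of the catalog idea, the sketch below models a tiny data asset knowledge graph as subject-predicate-object triples using only the Python standard library. Every identifier in it (the `asset:`, `source:`, `concept:`, and `ex:` names, and the helper functions) is hypothetical and chosen for illustration; a real deployment would more likely use an RDF store and a standard vocabulary than an in-memory set.

```python
from typing import Dict, Iterator, Optional, Set, Tuple

Triple = Tuple[str, str, str]

# Hypothetical catalog: each entry is (subject, predicate, object).
# The assets live in different kinds of sources but share business concepts.
CATALOG: Set[Triple] = {
    ("asset:orders_table", "rdf:type", "ex:RelationalTable"),
    ("asset:orders_table", "ex:storedIn", "source:warehouse"),
    ("asset:orders_table", "ex:describes", "concept:Customer"),
    ("asset:support_tickets", "rdf:type", "ex:DocumentCollection"),
    ("asset:support_tickets", "ex:storedIn", "source:document_store"),
    ("asset:support_tickets", "ex:describes", "concept:Customer"),
    ("asset:clickstream", "rdf:type", "ex:Stream"),
    ("asset:clickstream", "ex:storedIn", "source:event_bus"),
    ("asset:clickstream", "ex:describes", "concept:WebSession"),
}


def match(
    s: Optional[str] = None,
    p: Optional[str] = None,
    o: Optional[str] = None,
) -> Iterator[Triple]:
    """Yield every triple that agrees with the given pattern; None is a wildcard."""
    for triple in CATALOG:
        if (
            (s is None or triple[0] == s)
            and (p is None or triple[1] == p)
            and (o is None or triple[2] == o)
        ):
            yield triple


def assets_describing(concept: str) -> Dict[str, str]:
    """Map each asset that describes `concept` to the source that holds it."""
    found: Dict[str, str] = {}
    for asset, _, _ in match(p="ex:describes", o=concept):
        for _, _, source in match(s=asset, p="ex:storedIn"):
            found[asset] = source
    return found


if __name__ == "__main__":
    # One business-level question, answered without knowing any source format.
    for asset, source in sorted(assets_describing("concept:Customer").items()):
        print(asset, "is stored in", source)
```

The point of the sketch is that a user asks about a business concept ("Customer") and the catalog answers with the assets and sources involved, whether relational, document-based, or streaming.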

Some “semantic layers” use non-standard, proprietary technologies to store metadata. This approach prevents the use of industry-wide ontologies like FIBO (financial services), SNOMED (medical), SCONTO (supply chain), OBML (life sciences), CDM-Core (manufacturing), GoodRelations (e-commerce), or SWIM (aviation). It also complicates future data integration and reinforces vendor lock-in.

Conversely, semantic layers implemented with W3C’s Semantic Technologies are based on open standards that complement an organization’s existing IT infrastructure. They future-proof the enterprise, prevent vendor lock-in, and provide a uniform view of all data (regardless of differences in formatting, types, and structure) that’s optimal for data integration, data governance, and monetization opportunities.

Read the Full Article at Dataversity.